Telling unbelievers belief is a choice

By: Mike Gashler

Theists often take the position that belief is a choice. Atheists usually disagree. Who is right? Let's analyze this...

Whenever two people become adamant about the "true" definition of a term, it is a very safe bet that both of them are trying to use the term to make valid points, and neither of them feels their point has been sufficiently acknowledged. Why is this such a safe bet? Because terms don't have "true" definitions. Terms are not some kind of natural phenomenon. They are man-made vehicles for communicating ideas.

So let's try to understand why both perspectives are valid...

Why belief is a choice

Suppose a certain father believes in disciplining his children. After a particularly violent spanking, his children start to exhibit signs of trauma. Someone calls Child Protective Services. They come to take away his children. They tell him he must stop abusing his children. And he responds, "Sorry, I just believe in spanking my children. There's nothing I can do about that. One cannot choose what he believes."

That would be pretty pathetic, right? Of course he could choose what he believes! Obviously, beliefs are a choice!

Why belief is not a choice

Suppose a certain father is hiking with his children. Suddenly, a bear emerges from the woods. The father becomes fearful for the safety of his family. But then he thinks, "This fear is just a product of my belief that there is a bear." So, the father closes his eyes and proceeds to mentally persuade himself there is no bear. When he no longer believes in the bear, he no longer fears for his family.

That would be pretty hard to pull off, wouldn't it? And even if this father actually could make himself stop believing in the bear, what a useless and cowardly response! So obviously, beliefs are not a matter of choice!

Why both points are actually valid

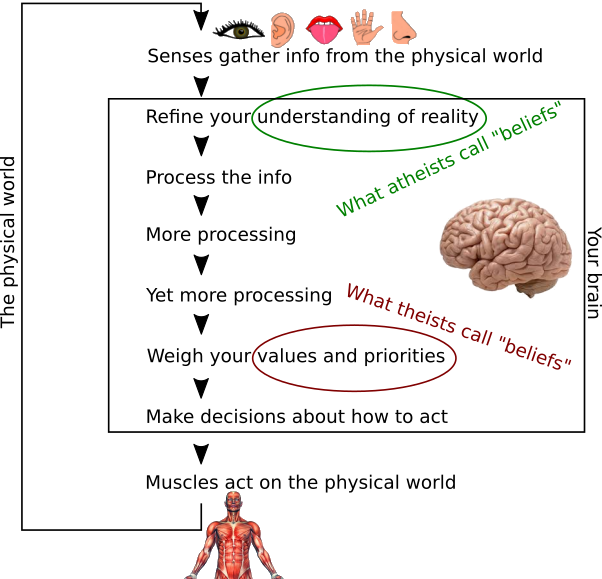

Those two examples used the term "beliefs" to describe two completely different concepts. In the spanking example, beliefs referred to one's values and priorities. In the bear example, beliefs referred to one's understanding of reality. Both of these concepts occur in the mind, but they occur at different points in the thinking process:

When we recognize that the two parties are using the term "beliefs" to refer to two completely separate concepts, it finally becomes possible to sort out the misunderstanding. Basically, here's what has been happening:

| | What theists say: "Belief is a choice." (What happens in the atheist's mind) | What atheists say: "Belief is not a choice." (What happens in the theist's mind) |
|---|---|---|
| What they hear | It's your fault God does not exist in your Universe. If you would only work harder to lie to yourself, you could change what is real using the power of your mind! Being honest with yourself is bad. You should just make yourself believe! | I am a robotic automaton. My choices are determined by my genetics and my environment. I take no responsibility for any decisions because there is nothing I can do about them. Nothing is ever my fault! |
| What they think | Is this person stupid? You can't make a car go faster by modifying the speedometer! You can't make a room warmer by adding mercury to the thermometer! Beliefs are not wishes! They are supposed to be true! | What a pansy attempt to justify unrighteous behavior! If you don't control your priorities, who does? If everything you do is forced upon you, then what is the purpose of even living? Why not just go die then? |
| How they respond | No, beliefs are what you are convinced is true. And I find your argument unconvincing. | No, beliefs are in your mind. And what is in your mind is yours to control. |

How to straighten this all out

If you are imagining some sort of fantasy in which you clarify your definition, and your opponent responds by saying, "Ohhh, thaaat's what you were saying! Now I see that you are right. I will abandon my position and adopt yours.", then I'm sorry to inform you that's just not going to happen. Clarifying terms is not the solution.

And if you imagine that you can just keep using the same term, and your listener will do all the work of divining which definition you are intending, I'm afraid that's probably not going to work out very well either. You are just going to have to be content with expressing your point, but yielding the term. Sorry. But hey, expressing your message is what you really wanted anyway, right?

In my opinion, the best way to avoid confusion from ambiguous terms is to avoid using ambiguous terms. So instead of saying, "beliefs are a choice", just say, "you control your priorities and values". And instead of saying, "beliefs are what you are convinced of", just say, "being convinced is what changes your understanding of reality". How are you going to persuade the other person to accept your position? Chances are you won't have to. Chances are the other person already agrees, as long as you say it in a non-ambiguous way.

A second perspective in which beliefs are a choice

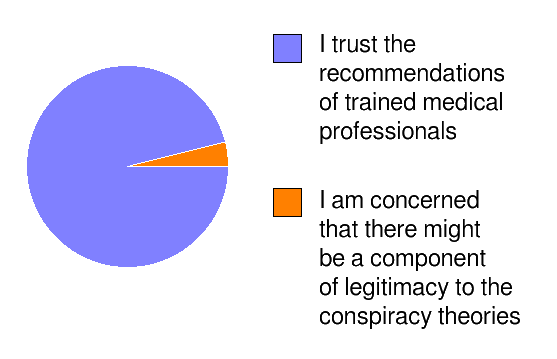

...but wait! We're not done. There is another perspective in which beliefs can be a choice. We all live in a world in which we must make decisions without knowing all the answers for sure. For example, suppose a certain parent mostly believes that vaccines are safe.

When the time comes to vaccinate his children, this parent cannot just sit around being crippled by his uncertainty. Doing nothing in this case would be the same as acting on the lesser sliver of his conflicted beliefs! (...which would be stupid.)

So in order to act intelligently, this parent temporarily suspends his uncertainty and vaccinates his children. Essentially, he makes a choice to act as if he fully believes, even though he only mostly believes. Does that mean he made a choice to believe? It might. To answer that question, we need to examine what happens to his beliefs after the moment of choice. Consider two possibilities:

- He could now say he is 100% certain that vaccines are safe.

- Or he could return to his former state of uncertainty.

On the one hand, there's not much value in worrying about whether he made the right choice. After all, there is no available mechanism to unvaccinate his children if he changes his mind. And admitting there is some possibility he made a mistake is not very fun. On the other hand, no new knowledge was gained, so what basis could he have for changing his beliefs? There's really no basis except for his own choice. And what if his friends ask him for advice? Should he now say, "I am 100% certain that vaccines are safe"? Something about doing that doesn't seem very forthright, does it?

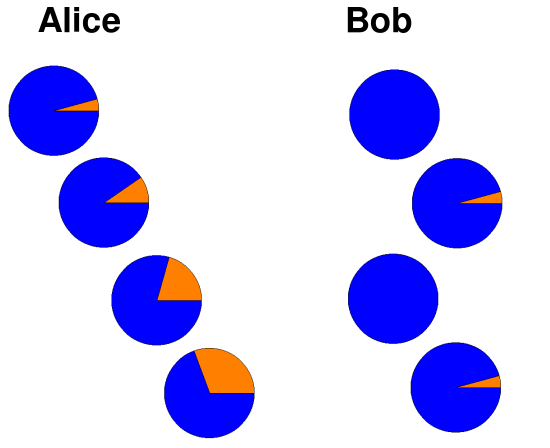

Now, for completeness, let's examine what happens when people make a choice to believe something that turns out to be wrong. Let's say Alice and Bob have reasons to suppose with 90% certainty that the Earth is flat. After all, it certainly looks flat. And some people are quite adamant that it indeed is flat. Alice says, "the matter is out of my hands", and sticks with 90% certainty. Bob believes in choosing his beliefs, so he banishes his uncertainty and chooses to become 100% certain that the Earth is flat.

Well, they're both wrong. What difference does it really make that Bob is a tiny bit more confident in his error? Suppose Cindy comes along and describes how Foucault pendulums would not behave the way they do on a flat Earth. It's a nice piece of evidence, but Cindy is also known to overstate her position. So Alice adjusts her confidence that the Earth is flat to 80%, and Bob adjusts his to 90%. ...except Bob doesn't like being in a state of uncertainty, so he eventually decides again that the Earth is definitely flat.

Then Dave comes along. He points out that the shadow of the Earth is round during lunar eclipses, even though lunar eclipses have occurred at different times during the day. But Dave is not convincing enough to change the minds of Alice or Bob either. So Alice adjusts her certainty down to 70%, and Bob temporarily brings his certainty back down to 90%.

Do you see where this is going? Alice is learning. Bob is not. When Emily, Frank, Gina, and Hal each share their evidence, Alice's knowledge grows. Bob's does not. Eventually--hopefully before Zach comes along--Alice will cross the 50% line, and will realize that she believes the Earth is round. She may never be absolutely certain, but at least she can learn. Bob can never learn. He will die insisting that he knows the Earth to be flat. Why? Because his beliefs are a choice.
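For readers who like to see the arithmetic, here is a minimal sketch of the Alice and Bob story. It assumes, purely for illustration, that every piece of evidence lowers confidence in a flat Earth by 10 percentage points (the size of the steps in the story above). The names `simulate`, `drop`, and `snaps_to_certainty` are made up for this example.

```python
def simulate(n_witnesses, snaps_to_certainty, start=90, drop=10):
    """Return the settled confidence (in percent) that the Earth is flat,
    recorded before any evidence and after each witness.

    Assumption for illustration: each witness lowers confidence by `drop`
    percentage points. A believer who "chooses" certainty snaps back to
    100 after every adjustment.
    """
    confidence = 100 if snaps_to_certainty else start
    history = [confidence]
    for _ in range(n_witnesses):
        # Both believers are nudged by the new evidence...
        confidence = max(0, confidence - drop)
        # ...but the one who chooses his beliefs discards the nudge.
        if snaps_to_certainty:
            confidence = 100
        history.append(confidence)
    return history


alice = simulate(5, snaps_to_certainty=False)
bob = simulate(5, snaps_to_certainty=True)
print("Alice:", alice)  # Alice: [90, 80, 70, 60, 50, 40]
print("Bob:  ", bob)    # Bob:   [100, 100, 100, 100, 100, 100]
```

Under these made-up numbers, Alice drops below 50% after the fifth witness, while Bob ends exactly where he started no matter how many witnesses show up.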

In the real world, evidence rarely comes in truckloads of overwhelmingly clear messages. Most knowledge is gained line upon line--here a little and there a little. Thus, the tendency to choose one's beliefs has the effect of rendering a person numb to the primary mechanism by which learning occurs.

All right, now let's turn this story around one last time. What if Alice and Bob originally believed the Earth was round (as it is), and all those other people were trying to mislead them into thinking it was flat? Then Bob's choice would protect him from being deceived, right?

Well yeah ...if truth were some kind of delicate thing that fell apart whenever someone disagreed with it, and if Alice and Bob were swayed by every argument they ever heard. But these are false assumptions. Alice was not left defenseless against deception. Alice and Bob don't have to accept every opinion they hear. They are allowed to use their brains. And truth is not some delicate thing that falls apart whenever someone disagrees.

Note that there are (at least) two ways Alice and Bob can defend their positions:

- "I am not yet sufficiently convinced your claims are factually accurate, but I value learning, so I would like to investigate the matter further."

- "I don't care whether or not you are right, your message is inconsistent with what I choose to believe, so I will no longer entertain your ideas."

Of course, the first defensive mechanism has a place. It is very often appropriate. But what about the second? Can our choices ever be more important than what is true?

In my opinion, telling ourselves our choices protect us from being deceived is probably more of a mechanism we use to comfort ourselves when learning is scary or painful. It may maximize the number of back pats we get from our peers. But it doesn't do anything to connect us to truth. It just snaps our beliefs to the nearest position of certainty. By contrast, refining our beliefs when new information comes along may be hard, but it is the only way to open the door for more knowledge to come in.

Choices should be good. Beliefs should be true. I don't think these two principles ever conflict. I think we should be careful to only make choices for matters of good/bad. For matters of fact/fiction, choice has no place. That's when we need to exercise humility.

Conclusions

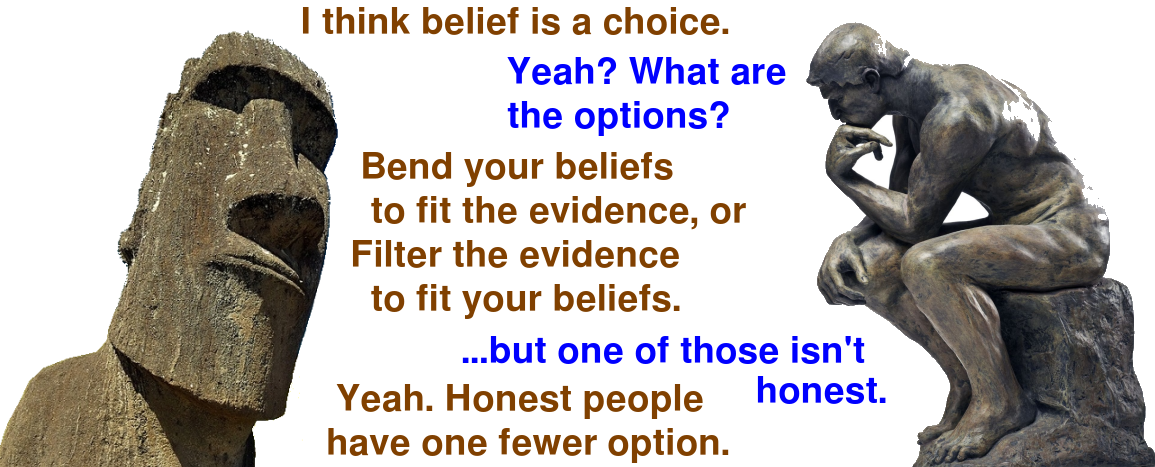

Our priorities and values are most certainly choices. We also have the power to choose how we understand reality. But is it moral to choose how we understand reality? I don't think so. Doing this seems dishonest because it fabricates certainty without knowledge. And it is counter-productive because it sabotages learning.